Link Metrics: Why They’re Only Indicators

There are many metrics that aim to signify the relative strength of a page or domain, particularly whether that page or domain is a “good” or “bad” place to receive a link from.

When discovering that these various metrics exist, you will likely ask one or both of the following questions:

- What’s the difference between the metrics?

- Which link metric is the most reliable?

I give my impartial view on those points further down the article. First of all, here’s a breakdown of the most commonly used metrics, in case you aren’t familiar with them:

| Metrics | Scale | Company |
| --- | --- | --- |
| Domain Rating (DR) & URL Rating (UR) | 0-100 | Ahrefs |
| Trust Flow (TF) & Citation Flow (CF) | 0-100 | Majestic |
| Page Authority (PA) & Domain Authority (DA) | 1-100 | Moz |

Ahrefs metrics

Ahrefs have developed their metrics with the primary aim of aiding link prospecting. The idea is that a link from a website with a higher Domain Rating (DR) will typically be more valuable than one from a website with a low DR.

However, a study that they carried out also suggests that the metrics are a useful estimator of a website’s ability to get search traffic from Google. This is because the study showed a correlation between the number of ranked keywords a domain has and its Domain Rating.

Domain Rating (DR)

Purpose: To assess the “relative link popularity” of a given website

Because of this aim, Ahrefs often use “link popularity” as a synonym for “Domain Rating”.

Domain Rating is a score between 0 and 100, with a higher number indicating higher “link popularity”.

Gary Illyes (Google) confirmed a while back that they “don’t really have overall domain authority”, so while Domain Rating doesn’t mimic anything specific in Google’s algorithm, it can still be used as a useful indicator of a domain’s overall strength.

we don't really have "overall domain authority". A text link with anchor text is better though

— Gary "鯨理" Illyes (@methode) October 27, 2016


URL Rating (UR)

Purpose: To show the strength of a page’s backlink profile

By showing the perceived strength of a page’s backlink profile, UR is intended to help the link prospector identify the strongest pages to target in their link acquisition efforts, or filter out prospects with an apparently weak profile.

Ahrefs pride themselves on adopting similar principles to Google’s original PageRank (not the toolbar, the formula behind it):

Tim Soulo, CMO and Head of Product Strategy (Ahrefs)

“We count links between pages; We respect the “nofollow” attribute; We have a “damping factor”; We crawl the web far and wide”

“Our advice is to use it [URL Rating], but not to rely on it entirely. Always review link targets manually (that means visiting the actual page) before pursuing a link.”

This is an incredibly important point which I’ll go into in more detail later when answering the question: Why are they only indicators?
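To make the PageRank-style principles quoted above a little more concrete, here is a minimal sketch of a link-counting score with a damping factor that ignores nofollow links. It is a textbook-style simplification and not Ahrefs’ actual URL Rating calculation; the function name `simple_pagerank`, the tuple format for links and the default values are all assumptions made for illustration.

```python
from collections import defaultdict


def simple_pagerank(links, damping=0.85, iterations=50):
    """Toy PageRank. `links` is an iterable of (source, target, nofollow) tuples.

    Illustrative only: this is not how any commercial tool computes its score.
    """
    pages = set()
    out_links = defaultdict(list)
    for source, target, nofollow in links:
        pages.update((source, target))
        if not nofollow:  # nofollow links pass no score
            out_links[source].append(target)

    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share set by the damping factor.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = out_links.get(page)
            if targets:
                # Split this page's score equally between its followed links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # No followed outlinks: spread the score evenly over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: "d" links to "c" with a nofollow link.
example_links = [
    ("a", "c", False),
    ("b", "c", False),
    ("c", "a", False),
    ("d", "c", True),
]
scores = simple_pagerank(example_links)
```

In this toy graph, page "c" collects score from "a" and "b" but nothing from the nofollow link on "d". Even a model this simple depends only on the link graph, which is one reason a manual review of the page itself is still needed.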


Majestic metrics

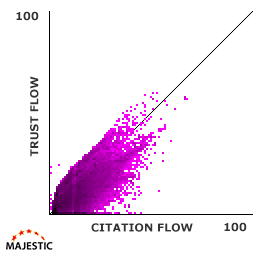

Trust Flow and Citation Flow (and they definitely are meant to be used in combination) are collectively called Flow Metrics, and were first announced in May 2012.

Majestic’s aim is to show how effective a URL is likely to be based on where that URL sits in the wider web. Citation Flow aims to signify strength, while Trust Flow evaluates the trustworthiness of that perceived strength.

Trust Flow (TF)

Purpose: To predict how trustworthy a page is

Trust Flow aims to judge the quality of a page by evaluating the connection the page has with other pages which are perceived to be trustworthy.

To monitor the flow of trust throughout the web, firstly there needs to be a source of trust. Majestic have achieved this by manually assembling a large list of seed sites which they deem trustworthy.

The logic here is that trusted sites will often link to other trusted sites. Therefore, if your page is connected to these trusted seed sites, then your page is likely trustworthy too.

“Sites closely linked to a trusted seed site can see higher scores, whereas sites that may have some questionable links would see a much lower score.”
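The seed-site logic described above can be sketched as a simple trust propagation: trust starts at the hand-picked seeds and weakens with every link hop. Majestic have not published their exact calculation, so this is only a generic seed-based sketch; the function name `propagate_trust`, the `decay` value and the page names are assumptions.

```python
def propagate_trust(links, seeds, decay=0.85, iterations=20):
    """Toy seed-based trust score. `links` is an iterable of (source, target).

    Illustrative only: not Majestic's Trust Flow algorithm.
    """
    out_links = {}
    pages = set(seeds)
    for source, target in links:
        pages.update((source, target))
        out_links.setdefault(source, []).append(target)

    # Only the seed pages start with any trust.
    base = {page: (1.0 / len(seeds) if page in seeds else 0.0) for page in pages}
    trust = dict(base)
    for _ in range(iterations):
        # Seeds keep a fixed share of trust; everything else must be inherited.
        new_trust = {page: (1.0 - decay) * base[page] for page in pages}
        for source, targets in out_links.items():
            share = decay * trust[source] / len(targets)
            for target in targets:
                new_trust[target] += share
        trust = new_trust
    return trust


# Hypothetical graph: one trusted seed, plus two pages unconnected to it.
example_links = [
    ("seed", "near"),
    ("near", "far"),
    ("spam", "spam2"),
]
trust_scores = propagate_trust(example_links, seeds={"seed"})
```

Here "near" ends up with more trust than "far" because it sits one hop closer to the seed, while "spam2" receives none at all because no path connects it to a trusted site.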


Citation Flow (CF)

Purpose: To measure the link equity or “power” the website or link carries

Citation Flow has been developed to indicate the relative influence a URL might have. It’s fairly widely agreed that Google (and other search engines) do not treat all links equally – some, due to the strength of the page they are coming from, can have a larger impact on search engine rankings. CF aims to identify which pages are more likely to be influential in this way.

It has evolved from ACRank – an old Majestic metric – and has “stronger, iterative mathematical logic” than its predecessor.


Moz metrics

Similar to Ahrefs, there is a reason why Moz have developed two separate metrics: one to judge a website at domain-level (DA) and one to judge specific pages (PA).

Dixon Jones, Global Brand Ambassador (Majestic)

“If you build a new site and only used Domain Authority to create links, you could EASILY have got linked from the worst page possible, even if it was from the best domain, because of the INTERNAL LINKS of the other web pages! How on earth are you going to be able to see the strength of a link if that strength depends on the internal links on an entirely different website?!”

So this quote from Dixon’s recent article doesn’t necessarily mean he thinks Moz metrics are useless (though I’m sure he’d prefer you used Majestic metrics). If both DA and PA are used in conjunction with each other then they can make for strong indicators.

The easiest way to check PA and DA is to install Moz’s MozBar, which gives an instant overview while you browse pages, as well as information in the SERPs.

Page Authority (PA)

Purpose: To predict how well a specific page will rank on search engine result pages

Moz achieve their best prediction of a page’s authority by combining various metrics from their link index (such as the # of linking URLs and referring domains) to come up with a relative score between 1 and 100.

The model focuses on link data, and so acts as an indicator of a page’s strength IF all non-link aspects (such as on-page factors) were equal.


Domain Authority (DA)

Purpose: To predict how well a website will rank on search engine result pages

DA, like PA, is based on different link metrics such as number of linking root domains and number of total backlinks. A higher DA is supposed to represent a stronger domain that’s therefore more likely to rank well in search engine rankings.

Moz say that it’s best used to compare against competitor scores. A really niche industry might naturally have a lot of websites with low DA. A score of 20 isn’t necessarily “bad” and may actually be one of the highest in that respective field.

“It’s best used as a comparative metric (rather than an absolute, concrete score) when doing research in the search results and determining which sites may have more powerful/important link profiles than others. Because it’s a comparative tool, there isn’t necessarily a “good” or “bad” Domain Authority score.”
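One way to put the comparative use described above into practice is to look at where a score sits among its competitors, rather than at the number itself. The sketch below is only an illustration: `relative_standing` is a hypothetical helper, and the competitor scores are made-up values, not real DA data.

```python
def relative_standing(score, competitor_scores):
    """Return the fraction of competitors that `score` matches or beats."""
    if not competitor_scores:
        raise ValueError("need at least one competitor score")
    beaten = sum(1 for other in competitor_scores if score >= other)
    return beaten / len(competitor_scores)


# Made-up scores for two hypothetical markets.
niche_market = [8, 12, 15, 18, 19]
broad_market = [35, 50, 62, 71, 88]

in_niche = relative_standing(20, niche_market)
in_broad = relative_standing(20, broad_market)
```

The same score of 20 tops the hypothetical niche market and sits at the bottom of the broad one, which is why it only makes sense as a comparison.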


So back to the questions…

What’s the difference between the metrics?

As discussed in more detail above, the metrics have quite a similar purpose, which is loosely: to determine the strength/trustworthiness of a page or domain. The differences are in how they determine that strength.

Each of the companies has its own data set and determining factors, which are combined to decide the score of each domain or individual page.

Which link metric is the most reliable?

They are all reliable in the sense that they fulfil their respective purposes: to measure or predict strength. Studies have shown that these metrics often correlate with where pages are ranked in the SERPs, which means they can be useful for getting a quick and loose interpretation of the likely strength or trustworthiness of a page/domain.

It’s hard to say which is the most reliable. I’m not just sitting on the fence – it’s a matter of personal preference (and I try not to put too much emphasis on any of them). A combination can often be beneficial. One page may have an incredibly high PA from Moz but a fairly low CF from Majestic. This should make you think twice about the indicated strength of the page.
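As a rough sketch of that “think twice” check, you could flag any page whose scores from different tools sit far apart. The tools’ scales are not directly comparable, so the gap threshold here is an arbitrary assumption, as are the function name `metrics_disagree` and the example scores.

```python
def metrics_disagree(scores, max_gap=30):
    """Return True if the highest and lowest scores differ by more than `max_gap`.

    `scores` maps a metric name to its score on a roughly 0-100 scale.
    The default gap of 30 is an arbitrary choice for illustration.
    """
    values = list(scores.values())
    return max(values) - min(values) > max_gap


# Made-up scores for two hypothetical pages.
needs_a_closer_look = metrics_disagree({"PA": 70, "CF": 20})
broadly_consistent = metrics_disagree({"PA": 40, "CF": 35})
```

A flag like this doesn’t tell you which tool is right; it only tells you which pages deserve a manual look first.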

The danger is in relying too heavily on them – not because they’re a load of rubbish, but because they’re not supposed to be completely relied upon. They are only indicators.

Why are they only indicators?

Simply put, these metrics are not exact replicas of how Google determines the strength of a page, or the strength that a link will pass on. They aim to mimic this part of Google’s algorithms, based on the information and understanding each respective company has. As they aren’t a 100% accurate replica, the scores don’t reflect the strength or trustworthiness of a page or domain with 100% accuracy either.

A website isn’t bad simply because it has low DA. A website isn’t untrustworthy simply because it has low TF. A page isn’t weak simply because it has low PA. The scores indicate the likely strength or trustworthiness; they do not reflect the true strength or trustworthiness.

If used as an indicator to refine a large data set, these metrics can be amazingly useful. For example, if you’re analysing a link profile with over 10,000 linking domains and you want to quickly see what the strongest referring domains are, then filtering this down to the top 500 domains based on DA, DR or CF will make your job a lot easier. This isn’t a job you can realistically do without link metrics.
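The filtering step described above might look something like the sketch below. It assumes the referring domains have been exported as a CSV with a `domain` column and a metric column; the column names and sample rows are hypothetical, and real exports from each tool use their own headings.

```python
import csv
import io


def top_domains(rows, metric="DR", limit=500):
    """Return up to `limit` rows with the highest value in the `metric` column.

    `rows` is an iterable of dicts, e.g. from csv.DictReader.
    Rows with no score for the metric are skipped.
    """
    scored = [row for row in rows if row.get(metric) not in (None, "")]
    scored.sort(key=lambda row: float(row[metric]), reverse=True)
    return scored[:limit]


# Hypothetical export with made-up domains and scores.
sample_export = io.StringIO(
    "domain,DR\n"
    "example-a.com,12\n"
    "example-b.com,71\n"
    "example-c.com,\n"
    "example-d.com,45\n"
)
strongest = top_domains(csv.DictReader(sample_export), limit=2)
print([row["domain"] for row in strongest])
```

Swapping `metric="DR"` for a DA or CF column gives the same kind of shortlist from a different tool, which you would then review by hand.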

However, remember that these metrics do not account for relevance, which is hugely important. Google may well view a link from an ultra relevant page with a PA of 20/100 as being a better signal than one from a non-relevant page that has a PA of 30/100.

There’s much more to a link than these metrics are able to provide, such as:

- the position of the link on the page;

- the anchor text used;

- what the linking page is about;

- what the page being linked to is about;

- whether the link is ‘nofollow’ or not

If these metrics are used without any other indicators, for example to showcase the value of an SEO offering or to vilify a link profile, they will often mislead, because they cannot show the full picture by themselves.

Adam has a background in journalism and almost a decade of experience in SEO. He’s passionate about data, content and cricket – although not necessarily in that order.